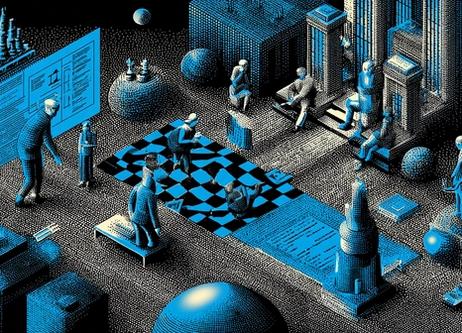

Implementing effective AI governance: framework, steering, and control

Building Effective AI Governance: Framework, Oversight, Control, Risk Management, Compliance, and Workflow Automation

Why AI Governance Is Becoming Essential in the Age of Automation

As artificial intelligence (AI) and workflow automation become major performance drivers, organizations face a critical governance challenge: how can they structure AI adoption in a way that is both agile and controlled? Regulatory requirements continue to intensify (including the European AI Act, whose obligations are being phased in), while concerns about trust, transparency, and algorithmic bias are growing.

According to a recent global survey, 64% of organizations say AI boosts innovation, but only 39% report a measurable impact on enterprise-level EBIT (source: McKinsey & Company).

AI governance is no longer optional. It is essential for moving from experimentation to industrialization while keeping risks under control. This article outlines a strategic framework for building effective AI governance, covering its definition, core pillars, operational oversight, and control and compliance.

Definition: What Is AI Governance?

AI governance refers to the processes, standards, and safeguards designed to ensure that AI systems are safe, ethical, operationally viable, and aligned with business goals (source: IBM).

Unlike data governance, which focuses on data quality, accessibility, and security, AI governance also covers: model design, deployment, monitoring, business impact assessment, bias detection, and compliance.

It fits naturally into automation and collaborative workflow environments: AI does not operate in a silo — it integrates into business processes where oversight and control are vital.

The Core Pillars of an AI Governance Framework

Strategic Alignment and Prioritization of Use Cases

Effective AI governance begins with identifying use cases truly aligned with the organization’s strategy: Which processes should be automated? What business value is expected? What is the feasibility?

Example: a company may manage AI as a portfolio of use cases, assessing each through a “value vs. feasibility” lens before deployment.

This prevents fragmented automation efforts and avoids low-value AI initiatives.
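The "value vs. feasibility" lens can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the 1 to 5 scales, the threshold of 3, the quadrant labels, and the sample use cases are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    value: int        # expected business value, 1 (low) to 5 (high)
    feasibility: int  # delivery feasibility, 1 (hard) to 5 (easy)


def quadrant(uc: UseCase, threshold: int = 3) -> str:
    """Place a use case in one of four value/feasibility quadrants."""
    high_value = uc.value >= threshold
    high_feasibility = uc.feasibility >= threshold
    if high_value and high_feasibility:
        return "prioritize"
    if high_value:
        return "invest to unblock"
    if high_feasibility:
        return "quick win, low priority"
    return "drop"


def rank(portfolio: list[UseCase]) -> list[UseCase]:
    """Order use cases by value x feasibility, highest first."""
    return sorted(portfolio, key=lambda uc: uc.value * uc.feasibility, reverse=True)


# Hypothetical portfolio, for illustration only.
portfolio = [
    UseCase("meeting summary bot", value=2, feasibility=4),
    UseCase("invoice triage", value=4, feasibility=5),
    UseCase("contract review assistant", value=5, feasibility=2),
]

for uc in rank(portfolio):
    print(f"{uc.name}: score={uc.value * uc.feasibility}, decision={quadrant(uc)}")
```

A simple product score is easy to explain to a steering committee. Organizations that weight value and feasibility differently could swap in their own scoring function without changing the rest of the sketch.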

Risk Governance: Security, Bias, Compliance

AI systems introduce risks: algorithmic bias, model drift, privacy violations, malicious use. Governance requires risk mapping, pre-deployment assessments, and continuous monitoring.
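Risk mapping and a pre-deployment assessment can be expressed as a small risk register. The sketch below is illustrative only: the likelihood times impact scoring, the tolerance of 12, and the sample ratings are assumptions, not values taken from any standard.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minor) to 5 (severe)
    mitigated: bool = False

    @property
    def severity(self) -> int:
        return self.likelihood * self.impact


def blocking_risks(register: list[Risk], max_severity: int = 12) -> list[Risk]:
    """Return unmitigated risks whose severity exceeds the tolerated level."""
    return [r for r in register if not r.mitigated and r.severity > max_severity]


# Hypothetical ratings for the four risks named above.
register = [
    Risk("algorithmic bias", likelihood=3, impact=5),
    Risk("model drift", likelihood=4, impact=3, mitigated=True),
    Risk("privacy violation", likelihood=2, impact=5),
    Risk("malicious use", likelihood=2, impact=4),
]

blockers = blocking_risks(register)
print("deploy" if not blockers else f"blocked by: {[r.name for r in blockers]}")
```

Running the same check on a schedule after deployment, with updated ratings, is one way to turn the register into continuous monitoring rather than a one-time sign-off.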

Key principles outlined by international institutions — fairness, transparency, accountability, confidentiality, security — provide a foundational framework (source: Harvard Professional).

According to a recent report, 47% of organizations rank AI governance among their top five priorities, and 77% are actively building an AI governance program (source: IAPP).

Organizational Structure and Multi-Disciplinary Teams

AI governance requires cross-departmental collaboration: IT, Data, Legal, Compliance, Security, and business units. Oversight is often ensured through an AI Steering Committee or multidisciplinary governance board.

This structure ensures that AI governance becomes part of daily workflows and project management practices.

Without it, governance remains superficial and non-operational.

Operational Governance: Processes, Tools, and Automation

AI Lifecycle Management: From Design to Monitoring

Effective oversight follows a clear cycle: design → training → validation → deployment → production monitoring → iteration.

Each phase requires documented controls (source: PwC).
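One way to make those documented controls enforceable is a gate that refuses to advance a model until the current phase's controls are recorded. The following sketch assumes hypothetical control names; the phase list mirrors the cycle described above.

```python
from enum import Enum


class Phase(Enum):
    DESIGN = 1
    TRAINING = 2
    VALIDATION = 3
    DEPLOYMENT = 4
    MONITORING = 5


# Hypothetical controls that must be documented before leaving each phase.
REQUIRED_CONTROLS = {
    Phase.DESIGN: {"use_case_approved", "risk_assessment"},
    Phase.TRAINING: {"data_lineage_recorded"},
    Phase.VALIDATION: {"bias_test_report", "performance_report"},
    Phase.DEPLOYMENT: {"rollback_plan", "owner_assigned"},
}


def next_phase(current: Phase, completed: set[str]) -> Phase:
    """Advance one phase, or raise if a required control is missing."""
    missing = REQUIRED_CONTROLS.get(current, set()) - completed
    if missing:
        raise ValueError(f"cannot leave {current.name}: missing {sorted(missing)}")
    if current is Phase.MONITORING:
        return Phase.DESIGN  # iteration: monitoring feeds the next design cycle
    return Phase(current.value + 1)


print(next_phase(Phase.DESIGN, {"use_case_approved", "risk_assessment"}).name)
```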

For example, tracking data quality or model drift is essential for maintaining long-term reliability.
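Drift tracking can be illustrated with the Population Stability Index (PSI), one commonly used measure of how far a recent sample has moved from a baseline distribution. This sketch is one possible approach rather than the method the cited sources prescribe. The bin count, the floor for empty bins, and the alert threshold of 0.25 (a widely quoted rule of thumb, not a standard) are assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data for illustration: a uniform baseline and a shifted copy.
baseline = [i / 100 for i in range(100)]
shifted = [x + 0.3 for x in baseline]

DRIFT_THRESHOLD = 0.25  # assumed alert level
print("drift detected" if psi(baseline, shifted) > DRIFT_THRESHOLD else "stable")
```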

Automated Controls and AI Observability

To prevent governance from slowing innovation, organizations implement automated monitoring workflows, alerts, and quality checks.

Automated AI governance makes it possible to detect anomalies quickly and ensure continuous compliance (source: Databricks).
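A minimal sketch of such automated checks, assuming hypothetical metric names and thresholds, might look like the following. A missing metric is treated as an alert, so a silent monitoring gap is not mistaken for a healthy model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Check:
    name: str
    metric: str
    passes: Callable[[float], bool]


# Hypothetical thresholds; real limits depend on the model and its risk level.
CHECKS = [
    Check("accuracy floor", "accuracy", lambda v: v >= 0.90),
    Check("null-rate ceiling", "null_rate", lambda v: v <= 0.02),
    Check("latency ceiling (ms)", "p95_latency_ms", lambda v: v <= 500),
]


def run_checks(metrics: dict[str, float]) -> list[str]:
    """Return one alert message per failed or unreported check."""
    alerts = []
    for check in CHECKS:
        value = metrics.get(check.metric)
        if value is None:
            alerts.append(f"{check.name}: metric '{check.metric}' not reported")
        elif not check.passes(value):
            alerts.append(f"{check.name}: observed {value}")
    return alerts


alerts = run_checks({"accuracy": 0.93, "null_rate": 0.05})
for message in alerts:
    print("ALERT:", message)
```

In practice the alert list would be routed to whatever notification channel the team already uses. That integration is deliberately left out of the sketch.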

Integrating a creative project management platform (such as MTM) supports deliverable tracking, model versioning, collaborative workflows, and asset archiving — making AI governance more traceable and efficient.

Managing Non-Human Identities (NHI) and Access

With the rise of AI agents, controlling non-human identities (NHI) is becoming a core governance issue.

Organizations must manage permissions, authentication, traceability, and actions performed by AI agents within automated workflows.
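The sketch below shows one possible shape for this: each agent identity carries an explicit allow-list and an accountable owner, and every authorization attempt is logged whether or not it succeeds. The class names, the action strings, and the in-memory log are assumptions for illustration. A production setup would rely on the organization's identity provider and a durable audit store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    owner: str                       # accountable human or team
    allowed_actions: frozenset[str]  # explicit allow-list, deny by default


@dataclass
class AccessController:
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, action: str) -> bool:
        """Allow the action only if explicitly granted; log every attempt."""
        allowed = action in agent.allowed_actions
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "allowed": allowed,
        })
        return allowed


controller = AccessController()
summarizer = AgentIdentity("agent-summarizer-01", "content-team", frozenset({"read:briefs"}))

controller.authorize(summarizer, "read:briefs")    # granted
controller.authorize(summarizer, "delete:assets")  # denied, but still recorded
```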

Asset Management, Versioning, and Documentation

AI models are assets — they require documentation, version control, annotation, and structured review processes.

MTM facilitates this through centralized asset management, validation workflows, and historical archiving.

This ensures full traceability and supports future audits.
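To make the idea of a documented, versioned model asset concrete, here is a small generic sketch. It does not represent MTM's data model or API. The field names, the in-memory registry, and the sample entries are assumptions. The SHA-256 fingerprint is one simple way to let an auditor detect that a record changed after review.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelRecord:
    name: str
    version: str
    owner: str
    training_data: str  # reference to the dataset snapshot used
    intended_use: str
    reviewed_by: str

    def fingerprint(self) -> str:
        """Stable hash of the record, so later edits are detectable in audits."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class Registry:
    def __init__(self) -> None:
        self._records: dict[tuple[str, str], ModelRecord] = {}

    def register(self, record: ModelRecord) -> str:
        """Store a new version; an existing version can never be overwritten."""
        key = (record.name, record.version)
        if key in self._records:
            raise ValueError(f"{record.name} {record.version} is already registered")
        self._records[key] = record
        return record.fingerprint()

    def history(self, name: str) -> list[str]:
        """Versions registered for a model, in registration order."""
        return [version for (model, version) in self._records if model == name]


# Hypothetical entries, for illustration only.
registry = Registry()
registry.register(ModelRecord("churn-model", "1.0.0", "data-team",
                              "customers-2024-01", "retention scoring", "risk-office"))
registry.register(ModelRecord("churn-model", "1.1.0", "data-team",
                              "customers-2024-06", "retention scoring", "risk-office"))
print(registry.history("churn-model"))
```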

Control and Compliance: Securing AI Usage

The Regulatory Landscape: AI Act, ISO Standards, OECD Principles

Key governance frameworks include the OECD AI Principles, ISO/IEC 42001, and the NIST AI Risk Management Framework (source: Bradley).

These three frameworks are voluntary, whereas the European AI Act is binding law. They call for transparency, impact assessments, auditability, and structured data/model management.

Organizations must adapt their governance practices to remain compliant.

Internal Audits and AI Maturity Scoring

Assessing the maturity of an AI governance program requires KPIs such as data quality, detected bias, compliance level, incident tracking, and model drift (source: Alation).

Regular audits enable process refinement and help quantify the governance program’s business value.
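A maturity score built from these KPIs can be as simple as a weighted average. The sketch below assumes each KPI has already been normalized to a 0 to 100 scale. The weights, level names, and cut-offs are hypothetical and would need to be agreed by the governance board.

```python
# Hypothetical weights; they sum to 1 and should reflect the organization's priorities.
WEIGHTS = {
    "data_quality": 0.25,
    "bias_testing": 0.20,
    "compliance": 0.25,
    "incident_handling": 0.15,
    "drift_monitoring": 0.15,
}


def maturity_score(kpis: dict[str, float]) -> float:
    """Weighted average of KPI scores, each expected on a 0-100 scale."""
    missing = WEIGHTS.keys() - kpis.keys()
    if missing:
        raise KeyError(f"missing KPIs: {sorted(missing)}")
    return round(sum(WEIGHTS[k] * kpis[k] for k in WEIGHTS), 1)


def maturity_level(score: float) -> str:
    """Map a score to an assumed three-level maturity scale."""
    if score >= 80:
        return "managed"
    if score >= 50:
        return "developing"
    return "initial"


# Sample audit results, for illustration only.
audit = {
    "data_quality": 80,
    "bias_testing": 60,
    "compliance": 70,
    "incident_handling": 50,
    "drift_monitoring": 40,
}

score = maturity_score(audit)
print(score, maturity_level(score))
```

Recomputing the score at each audit gives a simple trend line, which is often more informative than any single value.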

Security and Access Management

Cybersecurity must be central: access to data, models, and AI agents requires rigorous oversight.

Without strict access governance, automated AI workflows can introduce significant vulnerabilities.

Building Sustainable AI Governance to Master Automation and Create Value

AI governance and automation are no longer separate initiatives — they now sit at the heart of workflow planning and creative project management.

By applying a structured framework — strategy, operational oversight, and control — organizations can convert AI into a measurable value driver while effectively managing risks.

With asset management, versioning, collaborative workflows, and automation (as enabled by MTM), AI governance becomes a long-term competitive advantage.

It is time to move from fragmented pilots to scalable, sustainable, and effective AI governance.

FAQ – Frequently Asked Questions About AI Governance and Automation

1. How can an organization define a simple AI governance framework?

Align AI use cases with business strategy, identify risks, set up a cross-functional AI committee, and formalize processes and KPIs.

2. What risks need to be controlled in an AI project?

Bias, model drift, privacy breaches, unauthorized access, malicious use, and regulatory non-compliance.

3. How can AI system oversight be automated?

Through automated monitoring workflows, version control, continuous quality checks, and pre-deployment validation gates.

4. Which teams should participate in AI governance?

Data/AI teams, IT, Legal, Compliance, Security, business units, and executive management.

5. Why is documenting AI models important?

To ensure traceability, support audits, understand decisions, detect model drift, and meet regulatory requirements.